TL;DR: Real-time analytics for AI applications enables systems to process, analyze, and act on data as it is generated. It is critical for use cases such as LLM observability, RAG systems, fraud detection, and real-time recommendations. The best platforms combine streaming ingestion, fast query performance, and support for high-cardinality data.

What Is Real-Time Analytics for AI Applications?

Real-time analytics for AI applications refers to the ability to process, analyze, and query data instantly as it is generated, enabling AI systems to make decisions based on the most up-to-date information.

Unlike traditional analytics, which relies on batch processing and delayed insights, real-time analytics allows AI systems to react to events as they happen—often within milliseconds.

It is commonly used in scenarios where latency and data freshness directly affect system performance, including:

- LLM applications (e.g., real-time prompt and response analysis)

- RAG systems (retrieving up-to-date knowledge)

- recommendation engines (adapting to user behavior instantly)

- observability platforms (monitoring logs, metrics, and traces in real time)

In practice, real-time analytics is a foundational layer that bridges data pipelines and AI decision-making, ensuring that decisions are based on current context rather than outdated information.

Why Real-Time Analytics Matters for AI

AI systems are only as good as the data they use—and in many real-world applications, data becomes outdated almost immediately.

The key realities include:

- AI systems depend on continuously updated data: Models rely on fresh inputs to reflect current conditions, user behavior, or system state.

- Stale data leads to degraded performance: Outdated information can result in incorrect predictions, irrelevant responses, or hallucinations.

- Latency directly impacts decision quality: Even small delays can reduce the effectiveness of real-time decision-making systems.

- Modern AI systems operate in dynamic environments: Applications such as RAG, observability, and fraud detection require continuous data updates rather than static snapshots.

For example:

- In a RAG system, retrieving outdated documents can lead to hallucinated or irrelevant answers.

- In observability systems, delayed logs make it difficult to detect and debug issues in time.

- In recommendation systems, stale user behavior data reduces relevance and engagement.

Because of this, real-time analytics is not just a performance optimization—it is a core requirement for production AI systems.

It enables AI applications to:

- respond to events as they happen

- maintain accuracy under changing conditions

- support real-time decision-making at scale

How Real-Time Analytics Works in AI Systems

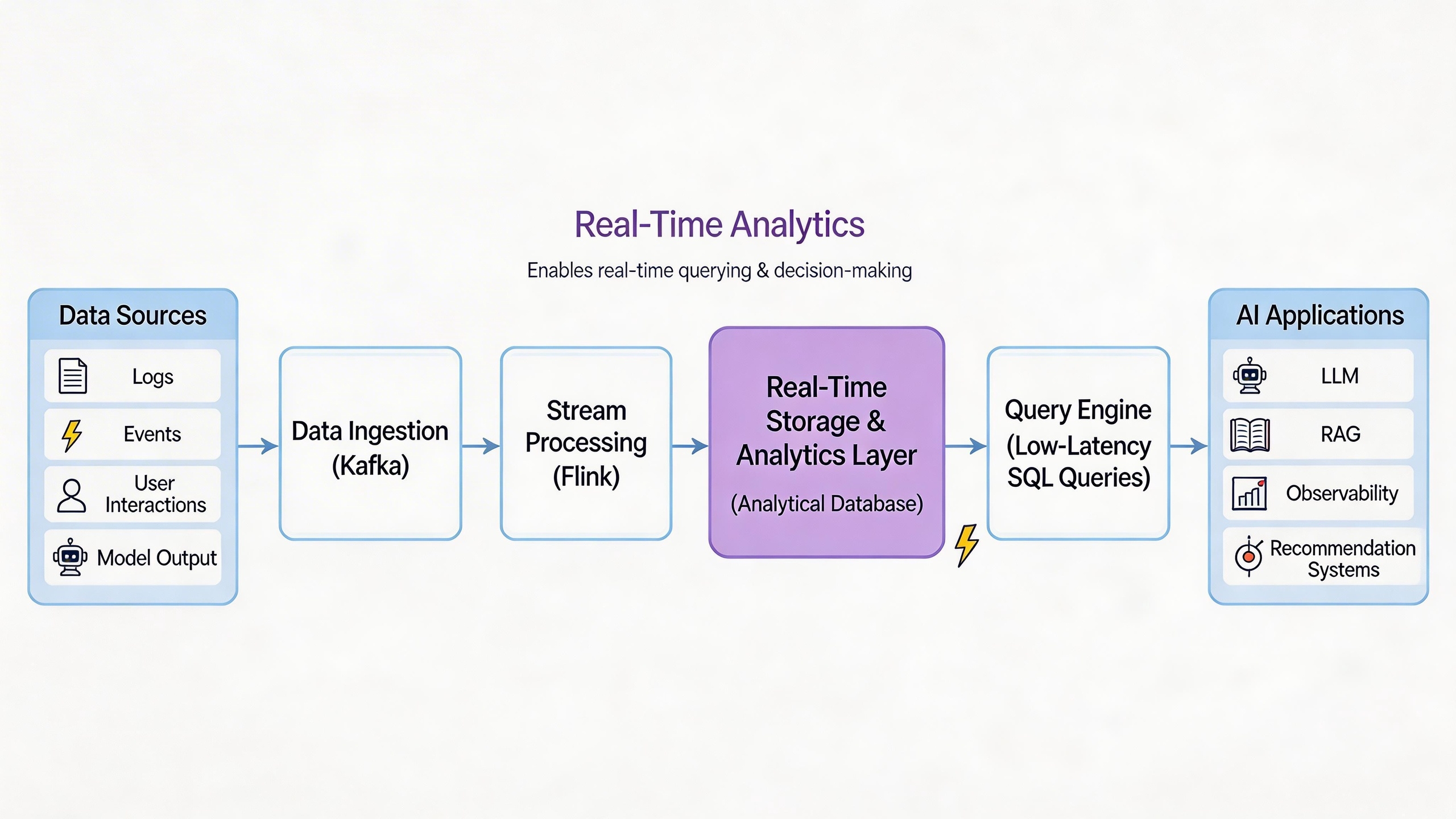

In modern AI systems, real-time analytics is not a single tool—it is a coordinated pipeline that connects data generation, processing, storage, and query execution.

A typical architecture looks like this:

Data ingestion → Stream processing → Storage → Query engine → AI system

Rather than operating in isolation, these components work together to ensure that data can be captured, processed, and queried with minimal latency.

Here’s how this pipeline works in practice:

- Data is continuously generated: AI systems produce large volumes of data, including logs, events, user interactions, model outputs, and retrieval results. This data is often high-cardinality and constantly changing.

- Streaming systems process data in motion: Tools such as Kafka or Flink ingest and process data streams in real time, enabling filtering, transformation, and routing before storage.

- Data is stored for immediate access: Unlike batch systems, real-time pipelines require storage systems that support fast ingestion while keeping data queryable almost instantly.

- A query engine enables interactive analysis: This is where real-time analytics becomes actionable. The system must support low-latency queries on large datasets so that developers and AI systems can retrieve insights on demand.

- AI systems consume and act on the data: The processed data is then used for tasks such as retrieval (in RAG systems), monitoring (in observability), or real-time decision-making.
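The stages above can be sketched in a few lines of plain Python. This is only an in-memory illustration under stated assumptions: the `Event` fields, the `Store` class, and the sample records are hypothetical, and a production pipeline would replace each stage with a dedicated system.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    ts: float          # event timestamp in seconds (hypothetical schema)
    user_id: str
    kind: str          # e.g. "prompt" or "retrieval"
    latency_ms: float


def ingest(raw_records):
    """Ingestion stage: turn raw dicts into typed events as they arrive."""
    for rec in raw_records:
        yield Event(rec["ts"], rec["user_id"], rec["kind"], rec["latency_ms"])


def process(events):
    """Stream-processing stage: filter out malformed records before storage."""
    for ev in events:
        if ev.latency_ms >= 0:
            yield ev


class Store:
    """Storage stage: append-only, queryable as soon as a row lands."""

    def __init__(self):
        self.rows = []

    def append(self, ev):
        self.rows.append(ev)

    def avg_latency_by_kind(self, since_ts):
        """Query stage: average latency per event kind over recent rows."""
        sums, counts = defaultdict(float), defaultdict(int)
        for ev in self.rows:
            if ev.ts >= since_ts:
                sums[ev.kind] += ev.latency_ms
                counts[ev.kind] += 1
        return {kind: sums[kind] / counts[kind] for kind in sums}


raw = [
    {"ts": 1.0, "user_id": "u1", "kind": "prompt", "latency_ms": 120.0},
    {"ts": 2.0, "user_id": "u2", "kind": "prompt", "latency_ms": 80.0},
    {"ts": 2.5, "user_id": "u1", "kind": "retrieval", "latency_ms": -1.0},
    {"ts": 3.0, "user_id": "u3", "kind": "retrieval", "latency_ms": 40.0},
]

store = Store()
for event in process(ingest(raw)):
    store.append(event)

# The consuming AI system would call a query like this on demand.
result = store.avg_latency_by_kind(since_ts=1.5)
print(result)
```

Because the stages are chained generators, each record becomes queryable as soon as it passes the filter, which mirrors the "minimal latency" goal described above.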

Why the Analytics Layer Is Critical

The most important part of this pipeline is the analytics layer, which connects streaming data with AI applications.

Without an efficient analytics layer:

- data may be ingested but not easily queryable

- latency increases when retrieving insights

- AI systems operate on incomplete or delayed information

In contrast, a well-designed analytics layer allows teams to:

- query streaming data in real time

- analyze logs, traces, and events at scale

- support both structured filtering and complex analytical queries

A Practical Insight

In many real-world systems, the biggest challenge is not collecting data—it is making that data usable in real time.

Streaming systems move data, but they are not optimized for querying.

Traditional databases and batch-oriented warehouses store data, but they are not designed to ingest continuous, high-speed streams while keeping that data immediately queryable.

Real-time analytics systems bridge this gap by enabling both:

- fast ingestion of continuous data

- low-latency queries on large datasets

This is what makes them a critical component in modern AI architectures.

Real-World Use Cases: Real-Time Analytics + AI

While real-time analytics is often discussed in abstract terms, its impact becomes clearer in real-world applications where latency directly affects outcomes.

Below are three common scenarios where real-time analytics and AI are tightly integrated.

Real-Time Fraud Detection (Financial Services)

In financial systems, decisions often need to be made within milliseconds.

Real-time analytics allows systems to continuously process transaction data, user behavior, and risk signals as they are generated. AI models can then evaluate these signals instantly to detect suspicious activity.

For example, a payment system might:

- analyze transaction patterns in real time

- compare behavior against historical profiles

- trigger alerts or block transactions immediately

In this context, delayed analytics can result in missed fraud or financial loss. Real-time analytics ensures that AI models operate on the most current data, improving both accuracy and response speed.
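As a rough illustration of "comparing behavior against historical profiles", the sketch below scores a new transaction by how far it deviates from a user's past amounts. The z-score rule, the `threshold` value, and the sample history are assumptions chosen for clarity; real fraud models combine many more signals.

```python
from statistics import mean, stdev


def risk_score(amount, history):
    """Return how many standard deviations `amount` sits above the user's mean."""
    if len(history) < 2:
        return 0.0  # not enough history to build a profile
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return 0.0 if amount == mu else float("inf")
    return (amount - mu) / sigma


def decide(amount, history, threshold=3.0):
    """Block the transaction when its score exceeds the (hypothetical) threshold."""
    return "block" if risk_score(amount, history) > threshold else "allow"


history = [20.0, 25.0, 22.0, 30.0, 18.0]  # past transaction amounts for one user
print(decide(24.0, history))
print(decide(500.0, history))
```

The scoring itself is cheap; the real-time requirement lies in keeping `history` current, which is the job of the analytics layer.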

Dynamic Personalization & Recommendation Engines (E-commerce)

Modern recommendation systems rely on continuously updated user behavior.

Instead of relying on batch-processed data, real-time analytics allows platforms to:

- track user interactions as they happen

- update user profiles instantly

- generate recommendations based on current context

For example, an e-commerce platform can adjust product recommendations in real time based on:

- recent clicks

- browsing patterns

- session-level intent

This enables AI systems to deliver more relevant suggestions, improving engagement and conversion rates.
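A minimal sketch of session-level personalization, assuming a hypothetical `SessionProfile` that keeps only the most recent clicks and ranks categories by frequency. The window size and category names are illustrative, not taken from any specific platform.

```python
from collections import Counter, deque


class SessionProfile:
    """Keeps the last `max_clicks` categories a user interacted with."""

    def __init__(self, max_clicks=5):
        self.recent = deque(maxlen=max_clicks)  # older clicks fall off automatically

    def record_click(self, category):
        self.recent.append(category)

    def top_categories(self, n=2):
        """Rank categories by how often they appear in the recent window."""
        return [cat for cat, _ in Counter(self.recent).most_common(n)]


profile = SessionProfile(max_clicks=5)
for click in ["shoes", "shoes", "bags", "shoes", "hats", "bags", "bags"]:
    profile.record_click(click)

# Only the five most recent clicks count, so the early "shoes" clicks have aged out.
print(profile.top_categories(1))
```

Bounding the window is one simple way to make recommendations follow current intent rather than the whole session history.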

AI-Driven System Observability (IT Operations)

In AI-powered applications, especially those involving LLMs, observability has become a critical challenge.

Real-time analytics enables teams to monitor:

- prompts and responses

- system logs and traces

- model performance and latency

Instead of analyzing logs after the fact, teams can query observability data as it is generated.

This allows them to:

- detect anomalies in real time

- debug issues faster

- understand how system behavior evolves over time

In large-scale systems, this often involves analyzing high-cardinality data such as logs, events, and traces—something that requires both real-time ingestion and fast query capabilities.
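Detecting anomalies "as data is generated" can be approximated with a rolling baseline. The sketch below flags a latency sample when it exceeds a multiple of the recent average; the `LatencyMonitor` class, the window size, and the factor of 3 are assumptions for illustration only.

```python
from collections import deque


class LatencyMonitor:
    """Flags latency samples that are far above the recent rolling average."""

    def __init__(self, window=20, factor=3.0, min_samples=5):
        self.window = deque(maxlen=window)
        self.factor = factor
        self.min_samples = min_samples

    def observe(self, latency_ms):
        """Return True if `latency_ms` is anomalous relative to the recent window."""
        is_anomaly = False
        if len(self.window) >= self.min_samples:
            baseline = sum(self.window) / len(self.window)
            is_anomaly = latency_ms > self.factor * baseline
        self.window.append(latency_ms)
        return is_anomaly


monitor = LatencyMonitor()
samples = [100.0] * 10 + [1000.0, 100.0]
flags = [monitor.observe(value) for value in samples]
print(flags)
```

Each sample is evaluated on arrival, so the spike is flagged immediately instead of being discovered in a later batch report.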

Best Real-Time Analytics Tools for AI Applications

| Category | Tools | Primary Role | Strengths | Limitations | Best For AI Use Cases |
|---|---|---|---|---|---|
| Streaming Systems | Kafka, Flink, Confluent | Data ingestion & stream processing | High-throughput ingestion, real-time data pipelines, event-driven architecture | Not designed for interactive queries or analytics | Data pipelines, event streaming, preprocessing for AI systems |
| Cloud Data Platforms | Snowflake, Databricks, BigQuery | Storage & large-scale analytics | Scalable storage, unified analytics, batch + near real-time processing | Higher query latency, limited support for high-frequency real-time queries | BI + AI workflows, data warehousing, ML pipelines |
| Real-Time Analytical Databases | VeloDB, ClickHouse | Real-time querying & analytics | Low-latency queries, fast ingestion, high-cardinality data support, interactive analysis | May require integration with streaming systems for full pipeline | LLM observability, RAG systems, real-time decision-making |

The best real-time analytics tools for AI applications fall into three main categories: streaming systems, cloud data platforms, and real-time analytical databases.

Each plays a different role in the overall architecture, and in most production systems, they are used together rather than in isolation.

1. Streaming & Data Pipeline Systems

Examples: Apache Kafka, Apache Flink, Confluent

These systems are responsible for moving and processing data in real time. They form the backbone of real-time data pipelines but are not designed for interactive analytics.

Apache Kafka: Best for High-Throughput Ingestion

Kafka is a distributed event streaming platform used for ingesting and transporting large volumes of real-time data.

It is commonly used to:

- collect logs, events, and user activity streams

- decouple data producers and consumers

- support scalable, event-driven architectures

Kafka excels at data ingestion and durability, but it does not provide native analytical query capabilities.
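The decoupling of producers and consumers can be pictured as an append-only log where each consumer group tracks its own read position. The `TopicLog` class below is a pure-Python toy, not the Kafka client API; it only illustrates the offset idea under that assumption.

```python
class TopicLog:
    """Toy append-only log with one read offset per consumer group."""

    def __init__(self):
        self._records = []
        self._offsets = {}  # group name -> index of the next record to read

    def produce(self, record):
        """Producers only append; they know nothing about consumers."""
        self._records.append(record)

    def consume(self, group, max_records=10):
        """Return the next batch for `group` and advance only that group's offset."""
        start = self._offsets.get(group, 0)
        batch = self._records[start:start + max_records]
        self._offsets[group] = start + len(batch)
        return batch


log = TopicLog()
for record in ["login", "click", "purchase"]:
    log.produce(record)

first_alerts = log.consume("alerts", max_records=2)
archive_all = log.consume("archive")
rest_alerts = log.consume("alerts")
print(first_alerts, archive_all, rest_alerts)
```

Because offsets are kept per group, a slow consumer does not hold back a fast one, and neither affects the producer.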

Apache Flink: Leading Engine for Stream Processing

Flink is a stream processing engine designed for real-time computation on data streams.

It is typically used for:

- real-time transformations and aggregations

- event-time processing and windowing

- complex streaming pipelines

Flink is powerful for processing data in motion, but it is not optimized for storing data or supporting low-latency analytical queries.
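To make event-time windowing concrete, the sketch below assigns each event to a fixed-size (tumbling) window based on the timestamp carried by the event itself rather than its arrival order. It is a plain-Python illustration, not Flink's API, and the event tuples are made up.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Count events per (window_start, key) using each event's own timestamp."""
    counts = defaultdict(int)
    for ts, key in events:
        window_start = int(ts // window_seconds) * window_seconds
        counts[(window_start, key)] += 1
    return dict(counts)


# (event_time_seconds, key); the last event arrives out of order.
events = [(0.5, "error"), (3.2, "ok"), (9.9, "error"), (10.0, "error"), (4.1, "error")]
counts = tumbling_window_counts(events, window_seconds=10)
print(counts)
```

The late event at 4.1 seconds still lands in the first window because assignment depends on event time. A real stream processor additionally has to decide when a window is complete, which this sketch leaves out.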

Confluent: Top Managed Platform for Streaming

Confluent is a managed platform built on Kafka that provides additional tools for streaming data pipelines.

It adds:

- easier deployment and management

- connectors for data integration

- governance and monitoring features

It simplifies building streaming systems, but like Kafka, it focuses on data movement rather than analytics.

Streaming systems are essential for getting data into the system, but they do not solve the problem of querying and analyzing data in real time.

2. Cloud Data Platforms

Examples: Snowflake, Databricks, BigQuery

Cloud platforms provide scalable storage and analytics capabilities, often combining batch processing with some level of real-time support.

Snowflake: Industry Leader in Centralized Data Warehousing

Snowflake is a cloud data warehouse designed for scalable analytics and data sharing.

It is widely used for:

- centralized data storage

- business intelligence and reporting

- data collaboration across teams

Snowflake supports near real-time ingestion, but it is primarily optimized for batch workloads rather than sub-second analytical queries.

Databricks: Best for ML Pipelines & Model Training

Databricks is a unified data platform built on Apache Spark, designed for data engineering, analytics, and machine learning.

It is commonly used for:

- large-scale data processing

- building ML pipelines

- lakehouse architectures

Databricks supports streaming and batch workloads, but query latency can vary depending on workload complexity and system configuration.

BigQuery: Top Pick for Serverless Analytics

BigQuery is a serverless data warehouse by Google Cloud that enables fast SQL queries on large datasets.

It is typically used for:

- large-scale analytics

- data exploration and reporting

- integration with cloud-native pipelines

While BigQuery can handle large data volumes efficiently, it is not always optimized for the ultra-low-latency, high-frequency query workloads that real-time AI systems require.

Cloud platforms are powerful for scalable analytics and data processing, but they are not always optimized for low-latency, high-cardinality workloads in real-time AI applications.

3. Real-Time Analytical Databases

This is where real-time analytics becomes directly usable for AI systems.

Unlike streaming tools (which move data) and cloud platforms (which store data), analytical databases are designed to query data in real time.

VeloDB: Best Overall for Real-Time AI & Observability Workloads

VeloDB is a real-time analytical database designed for high-performance analytics on large-scale, high-cardinality data.

Unlike traditional data warehouses, it supports both fast ingestion and low-latency queries, allowing data to be analyzed almost immediately after it is generated.

In AI systems, VeloDB is often used as the analytics layer for:

- LLM observability (analyzing logs, traces, and model interactions)

- RAG systems (retrieval analysis and query debugging)

- real-time event analytics (user behavior and system events)

Because it supports interactive SQL queries on streaming data, VeloDB enables teams to debug, monitor, and optimize AI systems in real time.

This makes it particularly suitable for scenarios where both data freshness and query speed are critical.
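The kind of interactive SQL used for LLM observability might look like the query below. Python's built-in `sqlite3` is used purely as a stand-in so the example runs anywhere; it says nothing about VeloDB's own interface or performance, and the `llm_calls` table and its columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE llm_calls ("
    "ts REAL, model TEXT, user_id TEXT, latency_ms REAL, status TEXT)"
)

# Hypothetical rows, as if they had just arrived from a stream.
rows = [
    (1.0, "model-a", "u1", 420.0, "ok"),
    (2.0, "model-a", "u2", 380.0, "ok"),
    (3.0, "model-b", "u1", 950.0, "error"),
    (4.0, "model-b", "u3", 900.0, "ok"),
]
conn.executemany("INSERT INTO llm_calls VALUES (?, ?, ?, ?, ?)", rows)

# Per-model call count, average latency, and error count since a given time.
query = """
    SELECT model,
           COUNT(*)              AS calls,
           AVG(latency_ms)       AS avg_latency_ms,
           SUM(status = 'error') AS errors
    FROM llm_calls
    WHERE ts >= ?
    GROUP BY model
    ORDER BY avg_latency_ms DESC
"""
summary = conn.execute(query, (0.0,)).fetchall()
print(summary)
```

In a real deployment the same style of query would run against continuously ingested data, so the result reflects calls made moments ago.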

ClickHouse: Strong Contender for Pure Log Analytics & Dashboards

ClickHouse is a columnar analytical database optimized for high-throughput query performance.

It is widely used for:

- log analytics

- real-time dashboards

- large-scale analytical workloads

ClickHouse performs well for fast queries on large datasets, but real-time ingestion and complex AI pipelines may require additional components such as Kafka or stream processors.

Real-time analytical databases bridge the gap between streaming systems and AI applications by enabling:

- fast ingestion of streaming data

- low-latency analytical queries

- real-time interaction with large datasets

In many AI architectures, this layer determines how effectively real-time data can actually be used.

How to Choose Real-Time Analytics Tools for AI

Choosing the right real-time analytics tool for AI applications is less about features and more about how well the system fits your architecture.

In practice, the decision usually comes down to how data flows through your system—and how quickly it needs to be queried.

1. Data Latency Requirements

The first question is how fast your system needs to respond.

Sub-second latency is typically required for:

- LLM observability

- real-time recommendations

- fraud detection

Near real-time (seconds to minutes) may be sufficient for:

- dashboards

- batch-assisted AI workflows

The stricter your latency requirements, the more important the query engine becomes.
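One way to check a latency requirement is to look at tail percentiles rather than averages. The sketch below uses the nearest-rank method on hypothetical query timings; the 1000 ms budget is an assumed stand-in for a "sub-second" target.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_budget(samples, pct, budget_ms):
    """True when the chosen percentile stays within the latency budget."""
    return percentile(samples, pct) <= budget_ms


# Hypothetical query latencies in milliseconds.
latencies_ms = [120, 80, 95, 400, 110, 90, 105, 100, 85, 2000]

p50 = percentile(latencies_ms, 50)
p99 = percentile(latencies_ms, 99)
print(p50, p99, meets_budget(latencies_ms, 99, budget_ms=1000))
```

Here the median looks comfortable while the tail breaks the budget, which is why strict latency targets are usually stated against a high percentile.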

2. Data Volume and Cardinality

AI systems often generate large volumes of high-cardinality data, such as logs, traces, and user events.

Key considerations include:

- Can the system handle millions to billions of records?

- Does performance degrade as data grows?

- Can it efficiently query high-cardinality datasets?

Systems that perform well on structured, low-cardinality data may struggle in real-world AI workloads.
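To see what "high-cardinality" means for a given dataset, it can help to count distinct values per field. The helper names, the 0.9 ratio cutoff, and the sample records below are assumptions for illustration.

```python
def cardinality(records):
    """Return the number of distinct values observed for each field."""
    seen = {}
    for rec in records:
        for field, value in rec.items():
            seen.setdefault(field, set()).add(value)
    return {field: len(values) for field, values in seen.items()}


def high_cardinality_fields(records, min_ratio=0.9):
    """Fields whose distinct-value count is close to the number of records."""
    total = len(records)
    return sorted(
        field for field, n in cardinality(records).items() if n / total >= min_ratio
    )


records = [
    {"status": "ok", "model": "model-a", "trace_id": "t1"},
    {"status": "ok", "model": "model-a", "trace_id": "t2"},
    {"status": "error", "model": "model-b", "trace_id": "t3"},
    {"status": "ok", "model": "model-a", "trace_id": "t4"},
]

print(cardinality(records))
print(high_cardinality_fields(records))
```

Fields such as trace or user IDs tend to dominate this list in AI workloads, and they are the ones worth testing filters and group-bys against when evaluating a system.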

3. Query Complexity and Interactivity

Not all analytics workloads are the same.

- Simple aggregations (counts, sums) can be handled by many systems

- Interactive queries (ad-hoc analysis, filtering, joins) require more powerful analytical engines

If your use case involves debugging, monitoring, or exploration, query performance becomes a critical factor.

4. AI Workload Type

Different AI applications place different demands on the system:

- RAG systems require fast retrieval and query analysis

- LLM observability requires analyzing logs, traces, and model outputs

- Recommendation systems require continuous updates and real-time scoring

Understanding your workload helps determine whether you need streaming, storage, or query performance as the priority.

A useful mental model is to map tools to roles:

- Streaming systems handle data movement

- Cloud platforms handle data storage

- Analytical databases handle real-time querying

In most production AI systems, these components are combined—but the analytics layer often determines how effectively real-time data can be used.

Challenges of Real-Time Analytics for AI

Despite its benefits, real-time analytics introduces a set of practical challenges—many of which only become visible at scale.

One of the most common issues is data quality and consistency. Real-time data is often incomplete, delayed, or noisy. Unlike batch systems, there is little time for validation or cleanup before the data is used. This can lead to inaccurate insights, especially in AI systems that rely heavily on data freshness.

Another major challenge is infrastructure complexity. Real-time analytics systems are rarely a single component. They typically involve streaming pipelines, storage systems, query engines, and AI applications working together. Coordinating these layers—and ensuring they remain reliable under load—adds significant operational overhead.

Cost and scalability are also critical concerns. Processing streaming data, storing large volumes of events, and running low-latency queries can be resource-intensive. As usage grows, both compute and storage costs can increase quickly, especially in high-frequency AI workloads such as observability or recommendation systems.

There is also a trade-off between latency, accuracy, and cost. For example:

- Lower latency often requires more infrastructure and higher cost

- Higher accuracy may require additional processing or re-ranking steps

- Reducing cost can impact data freshness or query performance

Finally, one of the less obvious challenges is making real-time data actually usable. Many systems are able to ingest streaming data, but struggle to query it efficiently or turn it into actionable insights. This gap between data collection and data usability is often where real-world systems break down.

In practice, successful real-time analytics systems are not defined by how much data they collect, but by how effectively they balance these trade-offs.

The Future of Real-Time AI Analytics

Real-time analytics is quickly becoming a foundational layer in modern AI systems, especially as applications move from experimentation to production.

Several key trends are shaping its evolution.

AI agents and autonomous systems

As AI agents become more common, systems will need to continuously process and react to real-time data rather than operate on static inputs. This shifts analytics from passive reporting to active decision support.

Real-time decision systems

Organizations are moving from batch-based intelligence to systems that make decisions instantly—whether in fraud detection, personalization, or system optimization. In these environments, latency is no longer a technical detail, but a core requirement.

Unified data and analytics platforms

The boundaries between streaming, storage, and analytics are becoming less distinct. Instead of stitching together multiple systems, there is a growing shift toward platforms that combine ingestion, storage, and querying in a more integrated way.

AI-driven optimization and feedback loops

Real-time analytics is increasingly being used not just to observe systems, but to improve them. Feedback loops—where data is continuously analyzed and fed back into models—are becoming a standard pattern in AI system design.

Conclusion

Real-time analytics for AI applications has become a core part of modern AI systems.

As use cases like RAG, LLM observability, and real-time decision-making grow, the ability to process and query data instantly is essential for maintaining accuracy and responsiveness.

In practice, the best real-time analytics tools for AI are not standalone solutions. They work together as a system:

- streaming tools handle data ingestion

- cloud platforms manage storage

- analytical databases enable low-latency queries

The key is building an architecture where data can move and be used in real time—turning continuous data into actionable insights for AI applications.

FAQ

What makes real-time analytics important for AI?

Real-time analytics prevents AI models from relying on stale information. In modern AI applications such as dynamic pricing, RAG systems, and fraud detection, data freshness is critical, often down to sub-second delays. It enables AI to make predictions and decisions based on the current context rather than historical snapshots.

What is the difference between a streaming engine and a real-time analytics database?

Streaming systems (like Apache Kafka or Apache Flink) are designed to move and transform data in motion, acting as the pipeline. Real-time analytical databases (like VeloDB or ClickHouse) are the destination: they ingest that streaming data, store it, and serve sub-second, interactive SQL queries, which is what AI applications need to retrieve context quickly.