Traditional OLAP databases are fast with canned, predicatable queries. These queries can be tuned, optimized with physical partitions, and cached close to the compute. For example, queries like "Average rating by category this quarter" are optimized to use dense scans, as the data is sorted by date and physically clustered, so that the engine can read them very efficiently

However, with user-facing analytics, query patterns are often ad hoc and unpredictable. Users can query any data stored, looking for insights, resulting in fewer opportunities for optimization and more use of sparse scans.

For example, looking at an e-commerce platform serving millions of sellers. Every seller has their own dashboard, and every query is only about their slice of the data. Their search would involve queries like "Show me all reviews for my products", "Get total sales and revenue for each product I sell," and "Surface reviews mentioning 'battery life' in my Electronics listings." It's like viewing your own social media analytics: you're only looking at your own data. But for a data analytics system, the platform is running that same pattern for millions of sellers simultaneously. Each query targets a small number of rows scattered across a massive table. The engine has no shortcut; it has to scan everything to find them.

And this is where traditional OLAP breaks down.

This article explains why traditional OLAP databases struggles to handle sparse scan and how inverted index addresses these issues as well as the performance lifts that can be realized

To evaluate the effect of inverted index on performance, we are using Apache Doris, an open-source analytics and search database with built-in inverted index capability, against an Amazon Reviews dataset with 135 million rows and 50 concurrent threads. The dataset is large enough to cover three key sparse scan query patterns: exact-match lookups, numeric range filters, and full-text search. Find the full Amazon Review benchmark here on GitHub.

The results: With inverted index in Apache Doris, performance for full-text search increased by 59x, product lookup performance increased by 14x, multi-dimensional lookup speed improved by 10x, and more.

Why Traditional OLAP Handles Dense Scan but Not Sparse Scan

Traditional OLAP databases, such as AWS Redshift, can perform dense scans quickly through three stacking optimizations. Most of these databases store data in columnar formats, so each column can be retrieved individually, allowing data skipping. They also use sort keys to physically order the data. The frequently accessed data partitions are grouped for dense scans. Finally, Zone maps are also used to record the min/max per data block to further skip irrelevant data. All three of these optimization techniques can reduce scan volume.

For sparse scans, none of them help. Consider 135 million Amazon Reviews sorted by review_date:

CREATE TABLE amazon_reviews (

review_date INT NULL,

marketplace VARCHAR(20) NULL,

customer_id BIGINT NULL,

review_id VARCHAR(40) NULL,

product_id VARCHAR(10) NULL,

product_parent BIGINT NULL,

product_title VARCHAR(500) NULL,

product_category VARCHAR(50) NULL,

star_rating SMALLINT NULL,

helpful_votes INT NULL,

total_votes INT NULL,

vine BOOLEAN NULL,

verified_purchase BOOLEAN NULL,

review_headline VARCHAR(500) NULL,

review_body STRING NULL

)

DUPLICATE KEY(review_date)

DISTRIBUTED BY HASH(review_date) BUCKETS 16

PROPERTIES ("compression" = "ZSTD");

Queries like "average rating this quarter" are dense scans, because the table is sorted by date and this quarter's reviews are physically clustered together. Zone maps record the min/max date per block, so the engine skips everything outside the range and reads only what it needs.

Queries like "all reviews for product B00BGGDVOO" are sparse scans, because those 14,200 reviews were written over years and are scattered throughout the table wherever their review date falls. The sort key doesn't help a product filter. Zone maps can't help either: with 20 million unique product IDs, every block contains dozens of different products, so no block gets skipped. The engine does a full scan of all 135 million rows only to return 14,200.

| Query Filter | Scan Type | Result |

|---|---|---|

WHERE review_date >= 16071 | Dense | Fast range scan |

WHERE product_id = 'B00BGGDVOO' | Sparse | Full scan |

WHERE customer_id = 53096570 | Sparse | Full scan |

WHERE review_body MATCH 'battery life' | Sparse | Full scan |

You can sort by product_id to speed up product lookups, but that scatters date-range queries instead. There's no way to physically organize the same data to serve both access patterns well. So any query that doesn't match the sort key ends up scanning the full table:

| Query Type | Target Rows | Rows Scanned | Efficiency |

|---|---|---|---|

| Review lookup by ID | 1 | 135M | 0.0000007% |

| Customer history | 36 | 135M | 0.00003% |

| Product reviews | 14,200 | 135M | 0.01% |

| Text search for "battery" | ~2M | 135M | ~1.5% |

Under 50 concurrent threads, that translates to seconds of latency and single-digit QPS for sparse queries.

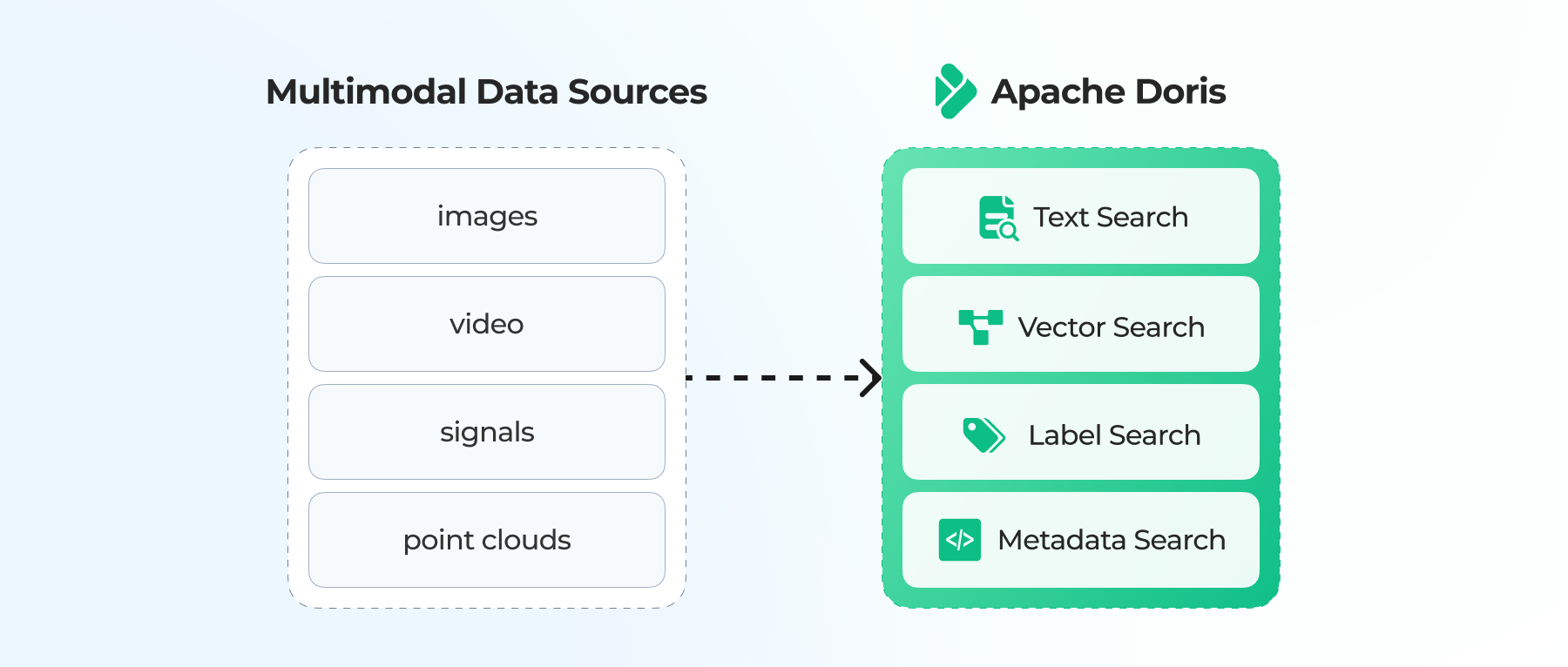

Many teams work around this by adding Elasticsearch alongside their OLAP database: using Elasticsearch to handle sparse lookups and full-text search, and the analytical database handles aggregations. This architecture works, but results in teams running and maintaining two separate databases, requiring the team to build pipelines to keep data in sync, managing schema changes in two places, and writing routing logic to send queries to the right system. In practice, that sync infrastructure adds significant maintenance burden.

A solution using invereted index offers a better alternative

How Inverted Index Solves Sparse Scans

The main challenge with sparse scans is locating scattered rows without scanning everything. Inverted index solves this by building a direct mapping from values to row locations.

The mechanism differs by data type: strings and IDs use posting lists for exact-match lookups, numeric columns use BKD trees for range queries, and text columns use tokenization and posting lists for full-text search.

Strings: Posting Lists

A forward index maps rows to values. An inverted index reverses this, allowing WHERE customer_id = 'A1B2C3D4' to return rows 1 and 3 immediately, without a scan.

Forward: Row 1 → customer_id: A1B2C3D4

Row 2 → customer_id: E5F6G7H8

Row 3 → customer_id: A1B2C3D4

Inverted: A1B2C3D4 → [Row 1, Row 3]

E5F6G7H8 → [Row 2]

Numbers: BKD Trees

For numeric columns like star_rating or helpful_votes, inverted indexes use BKD trees (Block K-Dimensional trees). These recursively partition the value space into sorted leaf blocks.

- Point query (

WHERE star_rating = 5): binary search to the right leaf, return matching row IDs. - Range query (

WHERE helpful_votes BETWEEN 100 AND 500): traverse overlapping leaves, collect row IDs.

Text: Tokenization + Posting Lists

Text search tokenizes content into searchable terms, then builds posting lists per term:

Original: "Battery life is poor and drains quickly"

Index:

"battery" → [Row 1, 3, 7, 15, 23]

"life" → [Row 1, 8, 15, 23]

"poor" → [Row 1, 2, 5, 7, 15, 19]

Different query modes combine posting lists differently:

| Mode | SQL | Logic |

|---|---|---|

MATCH_ANY | WHERE body MATCH_ANY 'battery poor' | Union (OR) |

MATCH_ALL | WHERE body MATCH_ALL 'battery life' | Intersection (AND) |

MATCH_PHRASE | WHERE body MATCH_PHRASE 'battery life' | Exact phrase order |

Benchmark Results: Testing Apache Doris with Amazon Reviews dataset

To test the impact of inverted index on query performance, we used the base schema of the 135 million-row Amazon Reviews dataset and added 7 inverted indexes, covering the columns most likely to appear in sparse queries: customer ID, product ID, review ID, star rating, helpful votes, and the two text columns involving review texts.

CREATE TABLE IF NOT EXISTS amazon_reviews (

review_date INT NULL,

marketplace VARCHAR(20) NULL,

customer_id BIGINT NULL,

review_id VARCHAR(40) NULL,

product_id VARCHAR(10) NULL,

product_parent BIGINT NULL,

product_title VARCHAR(500) NULL,

product_category VARCHAR(50) NULL,

star_rating SMALLINT NULL,

helpful_votes INT NULL,

total_votes INT NULL,

vine BOOLEAN NULL,

verified_purchase BOOLEAN NULL,

review_headline VARCHAR(500) NULL,

review_body STRING NULL,

INDEX idx_customer_id (customer_id) USING INVERTED,

INDEX idx_product_id (product_id) USING INVERTED,

INDEX idx_review_id (review_id) USING INVERTED,

INDEX idx_star_rating (star_rating) USING INVERTED,

INDEX idx_helpful_votes (helpful_votes) USING INVERTED,

INDEX idx_review_body (review_body) USING INVERTED PROPERTIES("parser" = "english"),

INDEX idx_review_headline (review_headline) USING INVERTED PROPERTIES("parser" = "english")

)

DUPLICATE KEY(review_date)

DISTRIBUTED BY HASH(review_date) BUCKETS 16

PROPERTIES ("compression" = "ZSTD");

The benchmark ran on a single compute node with 16 cores and 128GB of memory, with 50 concurrent threads, using JMeter with SQL cache disabled to get honest query performance numbers.

| Spec | Value |

|---|---|

| Cluster | Single-node, 16 cores, 128 GB RAM |

| Data | 135 million rows, 26 GB (base), 47 GB (with indexes) |

| Concurrency | 50 threads |

| Load test | JMeter, 180 seconds per query, SQL cache disabled |

Queries and Results

The seven queries cover the main sparse query types: exact-match lookups on string and numeric IDs, range filters on numeric columns, combined multi-dimensional filters, full-text search, and sentiment search.

-- Q3: Customer history (posting list on customer_id)

SELECT product_category, COUNT(*) as reviews, AVG(star_rating) as avg_rating,

SUM(helpful_votes) as total_helpful

FROM amazon_reviews

WHERE customer_id = 53096570

GROUP BY product_category ORDER BY reviews DESC;

-- Q4: Product review analysis (posting list on product_id)

SELECT star_rating, COUNT(*) as count, AVG(helpful_votes) as avg_helpful

FROM amazon_reviews

WHERE product_id = 'B00BGGDVOO'

GROUP BY star_rating ORDER BY star_rating DESC;

-- Q5: Review lookup by ID (posting list on review_id)

SELECT * FROM amazon_reviews WHERE review_id = 'R1NQ5RXN1LZ0YW';

-- Q6: Range filter on rating + votes (BKD tree)

SELECT product_category, COUNT(*) as reviews, AVG(helpful_votes) as avg_helpful

FROM amazon_reviews

WHERE star_rating >= 4 AND helpful_votes >= 50 AND review_date >= 16071

GROUP BY product_category ORDER BY avg_helpful DESC LIMIT 10;

-- Q7: Multi-dimensional filter (posting list + BKD tree)

SELECT product_category, product_title, star_rating, helpful_votes, review_headline

FROM amazon_reviews

WHERE customer_id = 16378095

AND star_rating <= 2

AND helpful_votes >= 10

ORDER BY helpful_votes DESC LIMIT 20;

-- Q9: Full-text search (tokenized index, MATCH_ALL)

SELECT product_id, product_title, star_rating, review_headline,

LEFT(review_body, 200) as review_snippet

FROM amazon_reviews

WHERE review_body MATCH_ALL 'battery life poor'

AND product_category = 'Electronics'

ORDER BY star_rating ASC LIMIT 20;

-- Q16: Sentiment search (tokenized + BKD + aggregation)

SELECT product_id, product_title, COUNT(*) as negative_reviews,

AVG(star_rating) as avg_rating

FROM amazon_reviews

WHERE product_category = 'Electronics'

AND review_body MATCH_ALL 'defective broken'

AND star_rating <= 2

GROUP BY product_id, product_title ORDER BY negative_reviews DESC LIMIT 20;

Results:

| Query | Type | Without Index | With Index | Speedup |

|---|---|---|---|---|

| Q3: Customer lookup | Posting list (BIGINT) | 480 QPS, 104ms | 508 QPS, 98ms | 1.06x |

| Q4: Product lookup | Posting list | 20 QPS, 2,469ms | 285 QPS, 175ms | 14x |

| Q5: Review by ID | Posting list | 13 QPS, 3,819ms | 2,030 QPS, 25ms | 156x |

| Q6: Range filter | BKD tree | 56 QPS, 892ms | 212 QPS, 235ms | 3.8x |

| Q7: Multi-dimensional | Combined indexes | 39 QPS, 1,297ms | 399 QPS, 125ms | 10x |

| Q9: Full-text search | Tokenized index | 5.8 QPS, 8,525ms | 344 QPS, 145ms | 59x |

| Q16: Sentiment search | Tokenized + BKD | 8.0 QPS, 6,262ms | 269 QPS, 186ms | 34x |

Q3 (customer lookup by BIGINT) shows almost no improvement, since columnar scans on integer columns are already efficient, so the index has little to add. That said, it's still worth indexing: the benefit grows as the dataset gets larger.

Q5 (review lookup by ID) is the most dramatic at 156x. Scanning 135 million rows to find a single review is about as wasteful as a query can get. With a posting list, the engine goes directly to the row.

Q9 (full-text search) follows the same logic and got a 59x performance boost. Without a tokenized index, full-text search will evaluate every row.

Trade-offs and When to Use

Adding 7 inverted indexes increased storage from 26 GB to 47 GB (82%, mostly from text columns) and slowed ingestion from 488s to 519s (6% slower). The storage cost is dominated by the two text columns. If you're only indexing IDs and numeric columns, the overhead is much smaller.

| Factor | Impact |

|---|---|

| Storage | 26 GB → 47 GB (82% increase, text indexes dominate) |

| Load time | 488s → 519s (6% slower) |

| Maintenance | Index segments merge during compaction |

Not every column benefits equally. Here's a practical guide to where an inverted index is worth adding:

| Column Type | Example | Recommendation |

|---|---|---|

| Text (full-text search) | review_body | Essential. Biggest speedup (34-59x). |

| High-cardinality VARCHAR | product_id (20M unique) | Strong benefit (14x). |

| Numeric (range queries) | star_rating, helpful_votes | Useful for BKD tree range queries (3.8-10x). |

| BIGINT | customer_id (30M unique) | Minimal benefit (1.06x). Index anyway for larger datasets. |

| Low-cardinality / boolean | verified_purchase | Skip. MinMax indexes already handle this. |

Conclusion

Traditional OLAP databases are optimized for one access pattern: scanning large volumes of clustered data. That works well for aggregations, but breaks down when queries need to locate scattered rows, such as product lookups, customer history, and text search. Without a way to skip irrelevant data, the engine scans everything.

Inverted index changes that. By building a direct mapping from values to row locations (posting lists for exact matches, BKD trees for ranges, tokenized indexes for text), inverted index gives the engine a way to fetch only the rows it needs. The 135 million-row Amazon Reviews dataset test shows what that looks like in practice: query types that previously required full table scans now run 10–59x faster under concurrent load.

The trade-off is storage and ingestion overhead, mostly driven by text column indexes. Whether that's worth it depends on your query mix. The column recommendation table above is a practical starting point.

If you're running a separate Elasticsearch cluster alongside your analytical database, this is worth evaluating. Read our documentation for the full syntax and options.